Building a Custom Claude Code Statusline

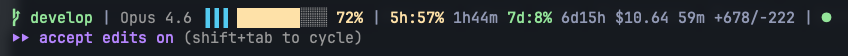

I was watching a YouTube video about someone’s Claude Code setup and noticed their statusline was packed with information - context usage with color-coded bars, plan usage percentages, session timers, weather data, even a memory counter. My statusline was a basic one-liner showing the model name, a monochrome context bar, and a git branch. I wanted some of that, particularly the usage tracking and the visual polish.

What followed was a surprisingly educational detour through the Claude Code ecosystem, three different approaches to usage tracking, and the discovery that the most useful data comes from an API endpoint that barely anyone seems to know about.

Starting with the Basics

The Claude Code statusline is configured through settings.json and runs a shell script that receives JSON on stdin with session data - model info, context window percentage, cost, and the working directory (git details come from running git in that directory). The output supports ANSI color codes, so you can make it as visual as you want.

The first improvements were straightforward. I replaced the monochrome ▓░ context bar with solid █░ blocks and added color thresholds using the Catppuccin Mocha palette (which matches my terminal theme):

- Green below 50% - plenty of room

- Yellow at 50-79% - getting full

- Red at 80%+ - context compression is coming
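As a rough sketch of those thresholds, here is one way the bar could be rendered. It is written in Python for readability rather than in the bash the actual script uses, and the function names are made up for illustration. The hex values are the Catppuccin Mocha colors quoted later in this post, and the 24-bit ANSI escape assumes a truecolor terminal.

```python
# Catppuccin Mocha RGB values (the hex codes quoted in this post).
GREEN = (0xA6, 0xE3, 0xA1)
YELLOW = (0xF9, 0xE2, 0xAF)
RED = (0xF3, 0x8B, 0xA8)
RESET = "\033[0m"


def threshold_color(pct: float) -> tuple:
    """Green below 50, yellow for 50-79, red at 80 and above."""
    if pct >= 80:
        return RED
    if pct >= 50:
        return YELLOW
    return GREEN


def context_bar(pct: float, width: int = 10) -> str:
    """Render a fixed-width bar of filled and empty blocks in 24-bit color."""
    pct = max(0.0, min(100.0, float(pct)))  # clamp out-of-range input
    filled = round(pct / 100 * width)
    r, g, b = threshold_color(pct)
    bar = "█" * filled + "░" * (width - filled)
    return f"\033[38;2;{r};{g};{b}m{bar}{RESET}"


if __name__ == "__main__":
    for sample in (12, 50, 79.9, 80, 100):
        print(f"{sample:>5}% {context_bar(sample)}")
```

Keeping the threshold lookup separate from the rendering means the same colors can be reused for the usage percentages later on.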

I also added the git branch with color coding (red for main, green for develop, mauve for feature branches), session cost, duration, and lines changed. The thinking effort indicator uses three vertical bars matching Claude Code’s own /model picker - blue bars for active effort level, grey for inactive.

None of this required anything beyond the JSON that Claude Code already provides. It’s all in the context_window, cost, model, and cwd fields piped to your statusline script.
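To make that concrete, here is a minimal sketch of turning the payload into a one-line status. The top-level keys (model, context_window, cost, cwd) are the ones named above, but the nested key names (display_name, used_percentage, total_cost_usd) are assumptions, so check them against the JSON your own Claude Code version actually sends.

```python
import json

# Hypothetical payload: top-level keys follow the fields named in this post;
# the nested key names are assumptions and may differ in practice.
SAMPLE = """
{
  "model": {"display_name": "Opus"},
  "cwd": "/home/me/project",
  "context_window": {"used_percentage": 42.0},
  "cost": {"total_cost_usd": 1.2345}
}
"""


def render_statusline(payload: dict) -> str:
    """Build a plain one-line status from the session JSON, tolerating gaps."""
    model = (payload.get("model") or {}).get("display_name", "?")
    pct = (payload.get("context_window") or {}).get("used_percentage")
    cost = (payload.get("cost") or {}).get("total_cost_usd")
    cwd = payload.get("cwd", "")

    parts = [model]
    if pct is not None:
        parts.append(f"ctx {round(pct)}%")
    if cost is not None:
        parts.append(f"${cost:.2f}")
    if cwd:
        parts.append(cwd.rstrip("/").rsplit("/", 1)[-1])  # last path component
    return " | ".join(parts)


if __name__ == "__main__":
    # A real statusline script would use json.load(sys.stdin) instead.
    print(render_statusline(json.loads(SAMPLE)))
```

Every lookup falls back to a default, because a statusline that crashes on a missing field is worse than one that shows slightly less.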

The Quest for Usage Data

The piece I really wanted was plan usage - the 5-hour window and weekly utilization percentages. If you type /usage inside Claude Code, you see these numbers. But getting them into a statusline script is a different problem.

Attempt 1: ccusage and Dollar Costs

The first tool I tried was ccusage, an npm package that reads Claude Code’s local JSONL transcript files and calculates costs. It has a blocks --active --json command that returns the current 5-hour window with dollar amounts and projections.

The idea was simple: if you know the cost limit per window for your plan, you can calculate a percentage. The claude-statusline repository - the one from the YouTube video - does exactly this. It estimates Max 5x at $83 per 5-hour window and $500 per week.
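The arithmetic behind that estimate is a single division. The sketch below uses the guessed Max 5x limits quoted above; the function name and the sample costs are hypothetical and only illustrate the calculation.

```python
# Guessed dollar limits for Max 5x, as quoted above; not official figures.
FIVE_HOUR_LIMIT_USD = 83.0
WEEKLY_LIMIT_USD = 500.0


def estimated_pct(cost_usd: float, limit_usd: float) -> int:
    """Express accumulated cost as a whole-number percentage of a guessed limit."""
    if limit_usd <= 0:
        raise ValueError("limit must be positive")
    return round(cost_usd / limit_usd * 100)


if __name__ == "__main__":
    # Hypothetical costs, chosen only to illustrate the arithmetic.
    print(estimated_pct(41.5, FIVE_HOUR_LIMIT_USD))  # 5-hour window
    print(estimated_pct(125.0, WEEKLY_LIMIT_USD))    # weekly window
```

The result is only as good as the limit in the denominator, which is exactly where this approach runs into trouble.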

The numbers I got: 24% for the 5-hour window, 47% for the weekly. The real numbers from /usage: 40% and 6%.

Not even close. The problem is threefold. The plan limits are guesses - only the Max 20x limits have been verified by the community. The cost accounting from transcript files doesn’t match Anthropic’s internal accounting. And the weekly reset boundary doesn’t necessarily align with ISO weeks.

Attempt 2: Token-Based Counting

The second approach came from claude_monitor_statusline, a Ruby script that reads the same JSONL transcripts but counts tokens and messages instead of dollars. It defines limits like 88k tokens per 5-hour window for Max 5x.

I built a bash version that summed tokens from the last 5 hours across all project transcripts. The raw numbers were interesting - 30k input tokens, 146k output tokens, 3.5M cache creation tokens, 900 messages in one window. But as a percentage of what? The 88k limit is also a guess, and my actual token volumes didn’t line up with it at all.
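For illustration, here is a Python sketch of that kind of summation (the version described above was bash). It assumes each transcript line is a JSON object with an ISO-8601 timestamp and a message.usage dict holding the four token counters; those field names are assumptions about the transcript format, not something this post documents.

```python
import json
from datetime import datetime, timedelta, timezone

# Assumed transcript shape: one JSON object per line with an ISO-8601
# "timestamp" and a "message" -> "usage" dict holding these counters.
TOKEN_KEYS = (
    "input_tokens",
    "output_tokens",
    "cache_creation_input_tokens",
    "cache_read_input_tokens",
)


def sum_recent_tokens(lines, now, window=timedelta(hours=5)):
    """Total each counter over entries newer than now - window.

    `now` must be timezone-aware; naive timestamps are treated as UTC.
    """
    totals = dict.fromkeys(TOKEN_KEYS, 0)
    cutoff = now - window
    for line in lines:
        try:
            entry = json.loads(line)
            stamp = datetime.fromisoformat(entry["timestamp"].replace("Z", "+00:00"))
            usage = entry["message"]["usage"]
        except (ValueError, KeyError, TypeError, AttributeError):
            continue  # skip malformed lines and entries without usage data
        if stamp.tzinfo is None:
            stamp = stamp.replace(tzinfo=timezone.utc)
        if stamp < cutoff or not isinstance(usage, dict):
            continue
        for key in TOKEN_KEYS:
            totals[key] += int(usage.get(key) or 0)
    return totals


if __name__ == "__main__":
    now = datetime(2026, 2, 27, 13, 0, tzinfo=timezone.utc)
    sample = [
        '{"timestamp": "2026-02-27T12:30:00Z", "message": {"usage": {"input_tokens": 10, "output_tokens": 40}}}',
        '{"timestamp": "2026-02-27T06:00:00Z", "message": {"usage": {"input_tokens": 99}}}',
        "not json",
    ]
    print(sum_recent_tokens(sample, now))
```

The sums themselves are easy to get. What this cannot supply is a trustworthy limit to divide them by.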

I showed raw token counts without percentages for a while. Honest, but not very useful at a glance.

The Breakthrough: Anthropic’s OAuth API

Then I found a Python gist by lexfrei that took a completely different approach. Instead of reading local transcripts and guessing limits, it calls Anthropic’s actual usage API at https://api.anthropic.com/api/oauth/usage.

The authentication is elegant - it reads the OAuth token from your macOS Keychain where Claude Code stores its credentials. One HTTP GET request with that token returns the real utilization:

{
  "five_hour": {
    "utilization": 42.0,
    "resets_at": "2026-02-27T18:00:00.408510+00:00"
  },
  "seven_day": {
    "utilization": 7.0,
    "resets_at": "2026-03-06T08:00:00.408528+00:00"
  }
}

42% five-hour, 7% seven-day. Exactly matching /usage. No guesswork, no estimates, no reverse-engineering from transcript files. Just the actual numbers from Anthropic’s servers.

The same gist also checks status.claude.com for platform health - useful context when things feel slow.

The Final Implementation

The statusline ended up as two files. A bash script (statusline.sh) that handles all the formatting and a Python script (statusline-usage.py) that fetches the API data with caching.

Here’s what the final statusline shows, left to right:

- Git branch with color coding (red for main, green for develop)

- Model name as reported by Claude Code

- Thinking effort - three vertical bars, blue for active level, grey for inactive

- Context bar - 10-character progress bar, color shifts at 50% and 80%

- 5-hour usage - real percentage from the API with time until reset

- 7-day usage - same, with reset countdown

- Session stats - cost, duration, lines added/removed (dimmed)

- Platform health - green dot when operational, red when degraded
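The two usage segments need a compact "time until reset" display. The sketch below is one possible formatting, not necessarily what the real statusline.sh prints. It takes the pct and remaining_min values that the Python script in this post emits per window, and both function names are hypothetical.

```python
def format_remaining(minutes: int) -> str:
    """Turn minutes into a compact countdown such as '2h15m' or '3d4h'."""
    minutes = max(int(minutes), 0)
    days, rem = divmod(minutes, 1440)
    hours, mins = divmod(rem, 60)
    if days:
        return f"{days}d{hours}h"
    if hours:
        return f"{hours}h{mins:02d}m"
    return f"{mins}m"


def usage_segment(label: str, window: dict) -> str:
    """Render one window as e.g. '5h 42% (2h15m)'."""
    return f"{label} {window['pct']}% ({format_remaining(window['remaining_min'])})"


if __name__ == "__main__":
    print(usage_segment("5h", {"pct": 42, "remaining_min": 135}))
    print(usage_segment("7d", {"pct": 7, "remaining_min": 4560}))
```

Dropping the minutes once the countdown exceeds a day keeps the 7-day segment as narrow as the 5-hour one.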

The Python script caches successful API responses for 5 minutes and uses a file-based lock to prevent concurrent fetches. The bash script caches the Python output separately with a 60-second TTL and refreshes in the background. Two layers of caching means the statusline always returns instantly - more on why this matters below.
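The outer layer is a stale-while-revalidate pattern: return whatever is cached right away, and if it is older than the TTL, start a detached refresh for next time. Here is a Python sketch of that idea (the real outer layer is bash). The function name and the refresh command are hypothetical, and the refresh command is assumed to rewrite the cache file itself.

```python
import os
import subprocess
import sys
import tempfile
import time
from pathlib import Path


def read_with_background_refresh(cache_path: Path, ttl: float, refresh_cmd: list) -> str:
    """Return cached text at once; start a detached refresh when it is stale."""
    try:
        age = time.time() - cache_path.stat().st_mtime
        cached = cache_path.read_text()
    except OSError:
        age, cached = float("inf"), ""  # no cache yet: show nothing, refresh

    if age >= ttl:
        try:
            # Fire and forget: the caller never waits on the network.
            subprocess.Popen(
                refresh_cmd,
                stdin=subprocess.DEVNULL,
                stdout=subprocess.DEVNULL,
                stderr=subprocess.DEVNULL,
                start_new_session=True,
            )
        except OSError:
            pass  # a missing refresh command must not break the statusline
    return cached


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cache = Path(tmp) / "usage.json"
        cache.write_text('{"five_hour": {"pct": 42}}')
        os.utime(cache, (0, 0))  # pretend the cache is very old
        # Hypothetical refresh command; a real one would rewrite `cache`.
        print(read_with_background_refresh(cache, 60, [sys.executable, "-c", "pass"]))
```

The trade-off is that the display can lag by one refresh cycle, which is fine for numbers that move slowly.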

statusline-usage.py:

#!/usr/bin/env python3
"""Fetch Claude Code plan usage from Anthropic's OAuth API."""

import getpass
import json
import os
import subprocess
import sys
import time
from datetime import datetime, timezone
from pathlib import Path
from urllib.error import URLError
from urllib.request import Request, urlopen

CACHE_DIR = Path.home() / ".claude" / ".statusline-cache"
CACHE_PATH = CACHE_DIR / "usage-raw.json"
CACHE_TTL = 300  # 5 minutes
USAGE_API_URL = "https://api.anthropic.com/api/oauth/usage"
STATUS_API_URL = "https://status.claude.com/api/v2/status.json"
STATUS_CACHE_PATH = CACHE_DIR / "status-raw.json"
STATUS_CACHE_TTL = 120
LOCK_PATH = CACHE_DIR / ".fetch.lock"


def read_cache(path, ttl):
    """Return the cached text if it is younger than ttl seconds, else None."""
    try:
        if time.time() - path.stat().st_mtime < ttl:
            return path.read_text()
    except OSError:
        pass
    return None


def read_cache_any_age(path):
    """Read cache regardless of age, for stale-while-error fallback."""
    try:
        return path.read_text()
    except OSError:
        return None


def write_cache(path, content):
    """Write via a temp file and rename so readers never see a partial file."""
    path.parent.mkdir(parents=True, exist_ok=True)
    tmp = path.with_suffix(".tmp")
    tmp.write_text(content)
    tmp.rename(path)


def try_acquire_lock():
    """Prevent concurrent API calls from dozens of sessions."""
    try:
        LOCK_PATH.parent.mkdir(parents=True, exist_ok=True)
        # A lock older than 30 seconds is assumed to belong to a dead process.
        if LOCK_PATH.exists() and time.time() - LOCK_PATH.stat().st_mtime < 30:
            return False
        LOCK_PATH.write_text(str(os.getpid()))
        return True
    except OSError:
        return True  # if locking itself fails, fetch rather than block


def release_lock():
    try:
        LOCK_PATH.unlink(missing_ok=True)
    except OSError:
        pass


def get_oauth_token():
    """Read Claude Code's OAuth access token from the macOS Keychain."""
    try:
        result = subprocess.run(
            ["security", "find-generic-password",
             "-s", "Claude Code-credentials",
             "-a", getpass.getuser(), "-w"],
            capture_output=True, text=True, timeout=5)
        if result.returncode != 0:
            return None
        creds = json.loads(result.stdout.strip())
        return creds["claudeAiOauth"]["accessToken"] or None
    except Exception:  # missing binary, timeout, bad JSON, missing keys
        return None


def http_get(url, headers=None):
    """GET url and return the body as text, or None on any network error."""
    req = Request(url, method="GET")
    for k, v in (headers or {}).items():
        req.add_header(k, v)
    try:
        with urlopen(req, timeout=5) as resp:
            return resp.read().decode()
    except (URLError, OSError, TimeoutError):  # HTTPError (e.g. 401) is a URLError
        return None


def parse_window(data, total_minutes):
    """Convert one API window to {"pct", "remaining_min"}; total_minutes is unused."""
    util, resets_raw = data.get("utilization"), data.get("resets_at")
    if util is None or resets_raw is None:
        return None
    try:
        resets_raw = resets_raw.strip()
        if resets_raw.endswith("Z"):
            resets_raw = resets_raw[:-1] + "+00:00"
        resets_at = datetime.fromisoformat(resets_raw)
        if resets_at.tzinfo is None:
            resets_at = resets_at.replace(tzinfo=timezone.utc)
        remaining = max(
            int((resets_at - datetime.now(timezone.utc)).total_seconds()) // 60, 0)
    except (ValueError, TypeError, AttributeError):
        remaining = 0
    return {"pct": round(float(util)), "remaining_min": remaining}


def parse_api_body(body):
    """Parse a raw API response; return None for errors or unexpected shapes."""
    try:
        data = json.loads(body)
    except (json.JSONDecodeError, ValueError):
        return None
    if not isinstance(data, dict) or isinstance(data.get("error"), dict):
        return None
    result = {}
    for key, mins in [("five_hour", 300), ("seven_day", 10080)]:
        w = data.get(key)
        if isinstance(w, dict):
            result[key] = parse_window(w, mins)
    return result if result else None


def fetch_usage():
    # Return fresh cache if available
    cached = read_cache(CACHE_PATH, CACHE_TTL)
    if cached is not None:
        parsed = parse_api_body(cached)
        if parsed is not None:
            return parsed

    # Another session is already fetching: return stale data
    if not try_acquire_lock():
        stale = read_cache_any_age(CACHE_PATH)
        if stale:
            parsed = parse_api_body(stale)
            if parsed is not None:
                return parsed
        return {"error": "fetching"}

    try:
        token = get_oauth_token()
        if not token:
            stale = read_cache_any_age(CACHE_PATH)
            if stale:
                parsed = parse_api_body(stale)
                if parsed is not None:
                    parsed["_stale"] = True
                    return parsed
            return {"error": "no_token"}

        body = http_get(USAGE_API_URL, headers={
            "Authorization": f"Bearer {token}",
            "anthropic-beta": "oauth-2025-04-20",
        })
        if body:
            parsed = parse_api_body(body)
            if parsed is not None:
                write_cache(CACHE_PATH, body)  # only successful responses are cached
                return parsed

        # API failed: return stale data rather than showing an error
        stale = read_cache_any_age(CACHE_PATH)
        if stale:
            parsed = parse_api_body(stale)
            if parsed is not None:
                parsed["_stale"] = True
                return parsed
        return {"error": "api_fail"}
    finally:
        release_lock()


def fetch_status():
    """Return "" when operational, otherwise a short platform status label."""
    cached = read_cache(STATUS_CACHE_PATH, STATUS_CACHE_TTL)
    if cached is not None:
        return cached.strip()
    body = http_get(STATUS_API_URL)
    if not body:
        write_cache(STATUS_CACHE_PATH, "")
        return ""
    try:
        indicator = json.loads(body).get("status", {}).get("indicator", "none")
    except Exception:
        indicator = "none"
    status_map = {"minor": "degraded", "major": "major_outage", "critical": "critical_outage"}
    result = status_map.get(indicator, "")
    write_cache(STATUS_CACHE_PATH, result)
    return result


if __name__ == "__main__":
    output = fetch_usage()
    status = fetch_status()
    if status:
        output["platform_status"] = status
    json.dump(output, sys.stdout)

Design Choices

Catppuccin Mocha everywhere. I use Catppuccin across my terminal, editor, and prompt (Starship). Using the same palette in the statusline means everything feels cohesive. The specific colors - green (#a6e3a1), yellow (#f9e2af), red (#f38ba8) - are instantly recognizable as severity levels without needing to read numbers.

Symbols over text. The thinking effort started as [think:default] - functional but noisy. The three-bar indicator communicates the same information in three characters and matches what you see in Claude Code’s own /model picker. Platform status is a colored dot, not a word. Less visual clutter means the important numbers stand out.

Branch first. Git branch is the most common context switch indicator. Putting it first means you always know which codebase you’re affecting without scanning.

Background caching. The Python API call takes about 200ms. The JSONL transcript scanning (when I was using that approach) took 250ms. Either would add noticeable lag if run synchronously on every statusline update. Background refresh with atomic file writes (tmp + mv) means you always get a fast read from cache. Good enough for a statusline.
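The tmp + mv trick can be sketched in Python as follows. This variant is an illustration rather than the code used in this post: it picks a unique temp name per writer, on the assumption that several sessions might write at once, and relies on os.replace being atomic when source and target sit on the same filesystem.

```python
import os
import tempfile
from pathlib import Path


def write_atomic(path: Path, content: str) -> None:
    """Write to a unique temp file in the same directory, then rename over path."""
    path.parent.mkdir(parents=True, exist_ok=True)
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, prefix=path.name + ".")
    try:
        with os.fdopen(fd, "w") as handle:
            handle.write(content)
        os.replace(tmp_name, path)  # atomic when both are on one filesystem
    except BaseException:
        # Clean up the temp file if the write or the rename failed.
        try:
            os.unlink(tmp_name)
        except OSError:
            pass
        raise


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        target = Path(tmp) / "usage-raw.json"
        write_atomic(target, '{"five_hour": {"utilization": 42.0}}')
        print(target.read_text())
```

Readers either see the old file or the new one, never a half-written mix, which is what lets the statusline read the cache without any locking of its own.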

Real data over estimates. This was the biggest lesson. The community has built multiple tools for estimating Claude Code usage from local data - ccusage for costs, various scripts for tokens. The ones I tried were all well off, because they work from incomplete information with guessed limits. The OAuth API returns the same utilization percentages that /usage shows. If the data source exists, use it.

When It Breaks: Scaling to Many Sessions

Updated March 2026.

A couple of weeks after the initial implementation, the usage display started failing intermittently. The statusline would show !api_fail in red where the percentages should be. Running /login inside Claude Code fixed it every time, but it kept coming back.

The root cause turned out to be OAuth token expiry. Claude Code stores an OAuth access token in the macOS Keychain. The statusline script reads that token directly and hits the API with it. But OAuth tokens expire - and the statusline script has no ability to refresh them. Only Claude Code’s main process can do that. When the token goes stale between refreshes, the API returns a 401, the script sees a failure, and it dutifully displays the error.
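One thing that would have shortened the diagnosis is telling an expired token apart from a network problem. The script in this post treats every failure the same way. The sketch below is a hypothetical refinement that inspects the urllib exception instead, and the label strings are invented for illustration.

```python
from urllib.error import HTTPError, URLError


def classify_failure(exc: BaseException) -> str:
    """Map a urllib exception to a short error label for the statusline."""
    if isinstance(exc, HTTPError):  # must come first: HTTPError is a URLError
        if exc.code == 401:
            return "auth_expired"  # token rejected: time to run /login
        return f"http_{exc.code}"
    if isinstance(exc, (URLError, TimeoutError, OSError)):
        return "network"
    return "unknown"


if __name__ == "__main__":
    err = HTTPError("https://example.invalid", 401, "Unauthorized", None, None)
    print(classify_failure(err))
```

With a distinct label for 401, the statusline could prompt for /login directly instead of showing a generic failure.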

This is annoying but tolerable with one or two sessions. With a dozen or more running in parallel - which is my normal setup during heavy development - it gets worse. Every session’s statusline independently spawns a background Python process to refresh the cache. All of them read the same expired token from Keychain, all of them hit the API, all of them fail, and all of them race to write {"error": "api_fail"} to the same cache file.

Three changes fixed this:

Stale-while-error. The original script cached errors the same way it cached successes. If the API failed, you got a red error for 60 seconds until the next refresh attempt. Now the script only caches successful API responses. When a fetch fails, it returns the last good data instead, marked with a _stale flag. The statusline shows a dim ~ prefix on the usage numbers so you know the data isn’t fresh, but you still see useful percentages instead of an error.

Longer cache TTL. Usage data doesn’t change rapidly. The utilization percentage moves in response to your actual API usage over a 5-hour or 7-day window, so checking every 60 seconds was wasteful. The API cache now has a 5-minute TTL. With 15 sessions each refreshing once a minute, that’s the difference between up to 900 API calls per hour (15 × 60) and 12 (one shared fetch every 5 minutes).

Cross-session locking. A simple file-based lock prevents concurrent API calls. If one session is already fetching, others return stale data rather than dogpiling the API. The lock auto-expires after 30 seconds to handle crashed processes.
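The lock in the script above checks for the file and then writes it, which leaves a small window where two sessions can both decide the lock is free. A possible tightening, sketched here as an assumption rather than as the author's code, is to create the file with O_EXCL so that creation itself is the atomic test. A narrow race remains when two processes clear the same stale lock at once, so this reduces the problem rather than eliminating it.

```python
import os
import tempfile
import time
from pathlib import Path


def try_acquire_lock(lock_path: Path, max_age: float = 30.0) -> bool:
    """Create the lock file atomically; treat locks older than max_age as dead."""
    lock_path.parent.mkdir(parents=True, exist_ok=True)
    for _ in range(2):  # second pass runs only after clearing a stale lock
        try:
            fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY, 0o600)
        except FileExistsError:
            try:
                if time.time() - lock_path.stat().st_mtime < max_age:
                    return False  # someone else holds a live lock
                lock_path.unlink()  # stale: the owner probably crashed
            except FileNotFoundError:
                pass  # released between our checks; just retry
            continue
        with os.fdopen(fd, "w") as handle:
            handle.write(str(os.getpid()))
        return True
    return False


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        lock = Path(tmp) / ".fetch.lock"
        print(try_acquire_lock(lock))  # first caller wins
        print(try_acquire_lock(lock))  # second caller backs off
```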

The net effect: one API call per 5 minutes regardless of how many sessions are open, and token expiry is invisible as long as there’s any prior successful response cached. You still need to /login eventually to get fresh data, but you’re no longer staring at a red error message while you do it.
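On the display side, the consumer only has to handle three cases: an error, fresh data, and stale data. Here is a minimal Python sketch of that branching (the real rendering lives in the bash script). It uses the error and _stale keys the fetch script emits; the function name and the exact spacing are assumptions.

```python
DIM = "\033[2m"
RED = "\033[31m"
RESET = "\033[0m"


def render_usage(data: dict) -> str:
    """Format fetcher output: red '!error' on failure, dim '~' prefix when stale."""
    if "error" in data:
        return f"{RED}!{data['error']}{RESET}"
    prefix = f"{DIM}~{RESET}" if data.get("_stale") else ""
    parts = []
    for key, label in (("five_hour", "5h"), ("seven_day", "7d")):
        window = data.get(key)
        if isinstance(window, dict) and "pct" in window:
            parts.append(f"{label} {prefix}{window['pct']}%")
    return " ".join(parts)


if __name__ == "__main__":
    fresh = {"five_hour": {"pct": 42, "remaining_min": 135},
             "seven_day": {"pct": 7, "remaining_min": 4560}}
    print(render_usage(fresh))
    print(render_usage({**fresh, "_stale": True}))
    print(render_usage({"error": "api_fail"}))
```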

The Takeaway

Claude Code’s statusline is one of those features that looks minor but rewards investment. A well-configured statusline reduces cognitive load - instead of running /usage manually, the information is just there. And the customization surface is surprisingly deep once you start exploring what data is available.

The community tooling around Claude Code is growing fast. The most useful solution I found - the OAuth usage API - came from a GitHub gist with three stars, not from official documentation. If you’re building your own statusline, start with the reliable data (the JSON input), add the API usage data for plan tracking, and don’t bother with transcript-based estimates unless you enjoy calibrating inaccurate numbers.

The full source for both files is in the code blocks above. Drop them in ~/.claude/, configure statusLine in your settings.json, and you have accurate usage tracking in your statusline.
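For the settings.json step, the entry is a small object under the statusLine key. The sketch below merges it into an existing settings dict. The "type": "command" shape is my understanding of the Claude Code settings format and the script path is hypothetical, so verify both against the official documentation before relying on them.

```python
import json


def with_statusline(settings: dict, command: str) -> dict:
    """Return a copy of the settings with a command-type statusLine entry."""
    updated = dict(settings)
    # Assumed schema: {"type": "command", "command": "<path to script>"}.
    updated["statusLine"] = {"type": "command", "command": command}
    return updated


if __name__ == "__main__":
    # Hypothetical existing settings and script path.
    existing = {"someOtherSetting": True}
    print(json.dumps(with_statusline(existing, "~/.claude/statusline.sh"), indent=2))
```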